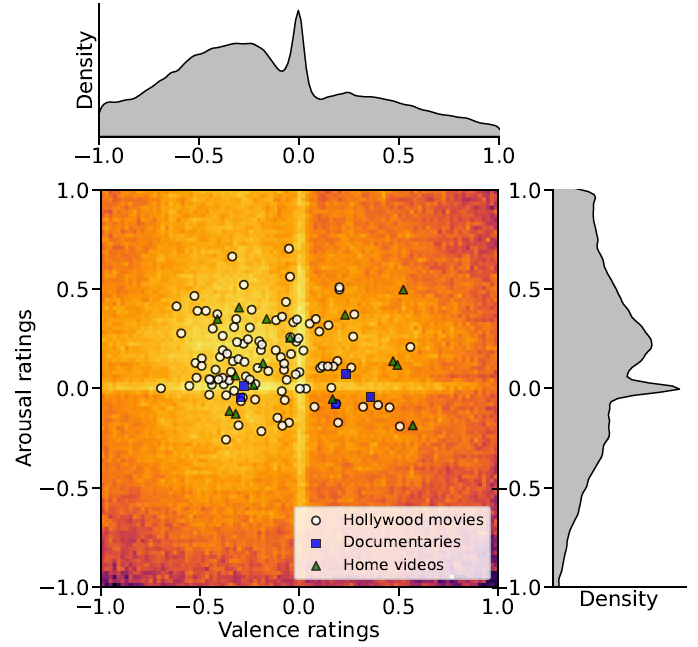

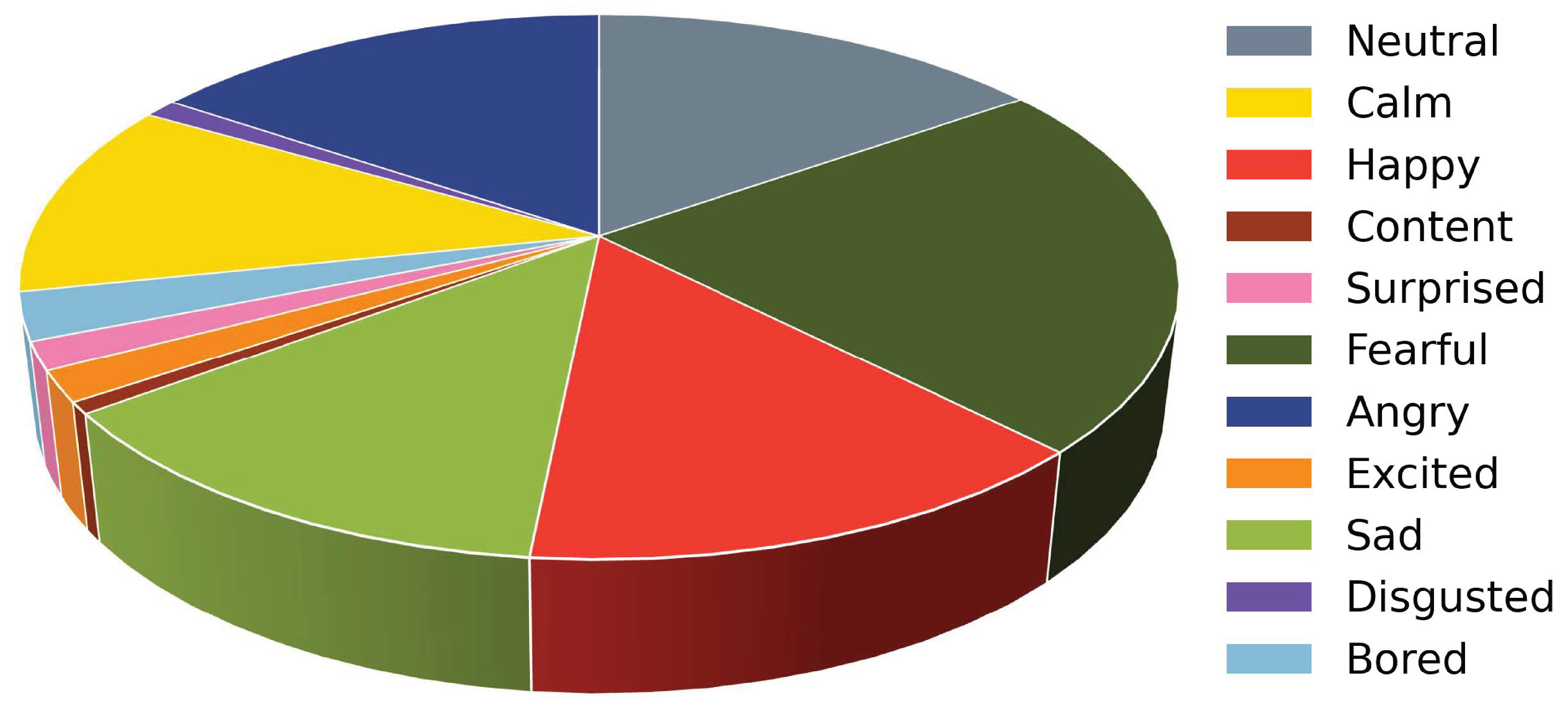

The ability to perceive the emotions of others is essential for navigating and understanding the social world around us. To understand this visual perceptual mechanism, previous studies have focused on face processing, which led many earlier datasets to collect face-centric data. Recent research has found that the visual system also uses background scene context to modulate and assign perceived emotion. In response, "in-the-wild" datasets that include contextual information, such as CAER and EMOTIC, have been created. However, these datasets either track only categorical emotions and ignore dimensional ratings (i.e., valence and arousal), or collect ratings only on static images. In this project, we propose BEAT: The Berkeley Emotion and Affect Tracking Dataset, the first video-based dataset that contains both categorical and continuous emotion annotations for a large number of videos (124 videos total). By providing both categorical and dimensional ratings for each video, BEAT offers more insight into human emotion perception. BEAT can also help researchers better understand how emotion is processed over time, as it is in the real world. In addition, compared to other datasets, a large number of annotators (n = 245) were recruited to avoid idiosyncratic biases. BEAT can also benefit artificial intelligence (AI) models: with less biased, multi-modal annotations, models trained on BEAT can be more robust and fair. Finally, we release a new AI benchmark for multi-task emotion recognition. The BEAT dataset will help increase our understanding of how humans perceive the emotions of others in natural scenes.